SITUATION ASSESSMENT

In February 2022, the Stanford Internet Observatory documented a sophisticated coordinated inauthentic behavior campaign targeting European audiences during the early weeks of Russia’s invasion of Ukraine. The operation deployed over 300 fabricated social media accounts across Facebook, Twitter, and Telegram, systematically amplifying narratives that Ukraine’s government had abandoned Kyiv and that Western military aid was being diverted to black markets.

This case illustrates why a robust methodology for analyzing influence operations has become a critical intelligence discipline. Open-source evidence indicates that modern influence campaigns operate at a scale and level of sophistication that demand systematic analytical frameworks to detect, analyze, and counter their effects.

THREAT VECTOR: Understanding Influence Operation Architecture

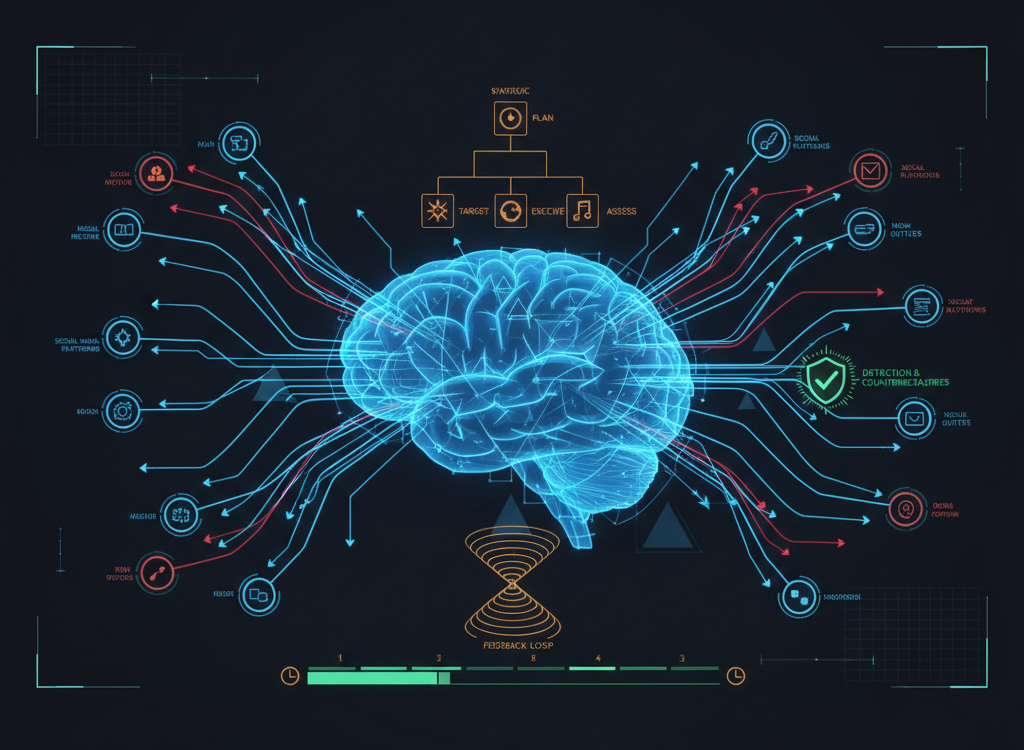

Contemporary influence operations function as integrated information warfare systems designed to manipulate perception, behavior, and decision-making across target populations. The NATO Cognitive Warfare concept, formalized in 2021, defines these operations as attacks on the «sixth domain» of warfare—the human mind itself.

A comprehensive influence operation methodology must account for what RAND Corporation researchers Christopher Paul and Miriam Matthews identified in their seminal 2016 study as the «Firehose of Falsehood» model. This framework reveals how modern disinformation campaigns achieve impact through high-volume, multi-channel, rapid, and continuous messaging that overwhelms target audiences’ cognitive defenses.

Assessment: Influence operations exploit a fundamental cognitive vulnerability described in the dual-process model popularized by Nobel laureate Daniel Kahneman: System 1 (fast, intuitive) thinking tends to override System 2 (slow, analytical) processing when individuals encounter emotionally charged or time-pressured information.

The operational pattern suggests that successful campaigns typically integrate three core components: content manipulation (crafted narratives designed to exploit cognitive biases), amplification infrastructure (networks of authentic and inauthentic accounts), and targeting precision (demographic and psychographic audience segmentation).

OPERATIONAL CASE STUDY: Documented Campaign Analysis

The «Ghostwriter» campaign, which Mandiant researchers have linked to the Belarusian government, provides a textbook example of a systematically executed influence operation. Active from 2017 to 2022, Ghostwriter targeted NATO member states through a coordinated sequence of cyber intrusions, content manipulation, and narrative amplification.

Bellingcat’s investigation revealed the operation’s methodology: hackers would compromise legitimate news websites and social media accounts, inject fabricated content that appeared authentic, then use networks of amplifier accounts to spread the manipulated information across platforms. The campaign specifically targeted Lithuanian, Latvian, and Polish audiences with narratives designed to undermine confidence in NATO security guarantees.

A second case study involves the Internet Research Agency’s documented tactics during the 2016 U.S. election cycle. The DFRLab’s comprehensive analysis demonstrated how operatives conducted extensive audience research, created culturally authentic personas, and deployed A/B testing to optimize message resonance across demographic segments.

This aligns with documented tactics, techniques, and procedures (TTPs) for professional influence operations: systematic audience analysis, persona development, content testing, and performance optimization using engagement metrics as feedback loops.

DETECTION PROTOCOL: Identifying Active Operations

A critical indicator of an organized influence campaign is coordinated inauthentic behavior: activity patterns that deviate from organic social media use. The following indicators form the foundation of a systematic detection methodology:

Technical Signatures:

- Account clustering: Multiple profiles sharing creation timestamps, similar biographical information, or synchronized activity patterns

- Amplification anomalies: Content receiving disproportionate engagement relative to follower count or historical performance

- Cross-platform coordination: Identical or near-identical content appearing simultaneously across multiple social media platforms

- Timing patterns: Posts and interactions occurring at regular intervals, suggesting automation or coordinated human activity

- Network analysis markers: Artificial clustering in follower networks, mutual follows, and engagement patterns
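Two of the technical signatures above, account clustering by creation time and regular posting intervals, can be approximated with simple statistics. The following is a minimal sketch rather than a validated detector: the function names, the one-hour window, and the minimum cluster size are illustrative assumptions, and real thresholds would need calibration against known cases.

```python
from datetime import datetime, timedelta
from statistics import mean, pstdev


def creation_bursts(accounts, window=timedelta(hours=1), min_size=3):
    """Chain accounts whose creation time is within `window` of the previous
    account (after sorting) and return chains with at least `min_size` members.

    `accounts` is a list of (handle, created_at) tuples. The window and
    minimum size are hypothetical defaults, not established thresholds.
    """
    ordered = sorted(accounts, key=lambda a: a[1])
    bursts, current = [], []
    for handle, created in ordered:
        # A gap larger than the window closes the current chain.
        if current and created - current[-1][1] > window:
            if len(current) >= min_size:
                bursts.append([h for h, _ in current])
            current = []
        current.append((handle, created))
    if len(current) >= min_size:
        bursts.append([h for h, _ in current])
    return bursts


def interval_regularity(post_times):
    """Coefficient of variation of the gaps between consecutive posts.

    Values near 0 mean near-constant spacing, which may suggest scheduling
    or automation. Returns None when there are too few posts to judge.
    """
    ordered = sorted(post_times)
    gaps = [(b - a).total_seconds() for a, b in zip(ordered, ordered[1:])]
    if len(gaps) < 2 or mean(gaps) == 0:
        return None
    return pstdev(gaps) / mean(gaps)


if __name__ == "__main__":
    # Illustrative data only; handles and timestamps are made up.
    sample = [
        ("acct_a", datetime(2022, 2, 20, 9, 0)),
        ("acct_b", datetime(2022, 2, 20, 9, 10)),
        ("acct_c", datetime(2022, 2, 20, 9, 25)),
        ("acct_d", datetime(2021, 6, 1, 14, 0)),
    ]
    print(creation_bursts(sample))
```

Neither measure is conclusive on its own. Legitimate accounts are also created in bursts (for example, after a news event), and scheduled posting tools produce regular intervals, so results of this kind are best treated as leads for analyst review.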

Content Signatures:

- Narrative convergence: Multiple sources promoting identical talking points or framing with no apparent organic connection between them

- Emotional manipulation: Content designed to trigger strong emotional responses, particularly anger, fear, or outrage

- Polarization amplification: Messages that artificially heighten existing social, political, or cultural divisions

- Source laundering: Attribution of fabricated quotes, statistics, or events to legitimate institutions or individuals
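Narrative convergence, like the cross-platform duplication noted under the technical signatures, can be screened for by measuring textual overlap between posts from different sources. The sketch below uses Jaccard similarity over three-word shingles. The function names, the shingle size, and the 0.6 threshold are assumptions chosen for illustration, and the sample posts are invented.

```python
import re
from itertools import combinations


def shingles(text, k=3):
    """Return the set of k-word sequences in `text`, lowercased."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < k:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a, b):
    """Jaccard similarity of two sets; 0.0 when both are empty."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def convergent_pairs(posts, threshold=0.6):
    """Return (source_a, source_b, similarity) for pairs of posts from
    different sources whose shingle overlap meets `threshold`.

    `posts` is a list of (source, text) tuples. The threshold is a
    hypothetical default, not an empirically derived cutoff.
    """
    sets = [(src, shingles(text)) for src, text in posts]
    pairs = []
    for (src_a, sh_a), (src_b, sh_b) in combinations(sets, 2):
        if src_a == src_b:
            continue  # overlap within a single source is not convergence
        score = jaccard(sh_a, sh_b)
        if score >= threshold:
            pairs.append((src_a, src_b, round(score, 2)))
    return pairs


if __name__ == "__main__":
    sample = [
        ("site_a", "The city water supply is contaminated share this before it is deleted"),
        ("site_b", "BREAKING the city water supply is contaminated share this before it is deleted"),
        ("site_c", "Local council approves new budget for road repairs next year"),
    ]
    print(convergent_pairs(sample))
```

High overlap shows only that the text matches. Wire-service copy and quoted press releases also produce near-identical posts, so a flagged pair still needs a check on whether the sources have a legitimate shared origin.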

DEFENSE FRAMEWORK: Multi-Layer Countermeasures

Effective defense against influence operations requires coordinated responses at the individual, organizational, and systemic levels. The EU DisinfoLab’s 2023 assessment emphasizes that successful countermeasures must address both technical detection and the building of cognitive resilience.

Individual-Level Defenses:

- Cognitive hygiene practices: Implement systematic fact-checking protocols before sharing information, particularly content that triggers strong emotional responses

- Source verification habits: Cross-reference claims across multiple independent, credible sources before accepting information as factual

- Platform literacy: Understand social media algorithms’ role in content curation and actively seek diverse information sources

- Emotional regulation: Recognize when content is designed to provoke immediate sharing and pause to evaluate accuracy

Organizational Protocols:

- Employee training programs: Regular briefings on current influence operation tactics and detection methodologies

- Information verification systems: Mandatory multi-source confirmation for sensitive or potentially viral information

- Incident response procedures: Clear escalation paths and communication protocols when influence operations are detected

- Partnership networks: Coordination with fact-checking organizations, cybersecurity firms, and government agencies

Systemic Countermeasures:

Platform-level interventions include algorithmic adjustments to reduce artificial amplification, account verification systems, and transparency requirements for political advertising. International cooperation frameworks like the EU’s Code of Practice on Disinformation provide coordinated policy responses to cross-border influence operations.

Open-source evidence indicates that the most effective countermeasures combine technical detection capabilities with human analytical expertise, as automated systems alone cannot reliably distinguish between authentic grassroots activity and coordinated manipulation.
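One way to combine automated screening with human judgment is a triage step that scores each case from automated signals and routes higher-scoring cases to an analyst rather than labeling them automatically. The sketch below assumes three signals already normalized to the range 0 to 1. The signal names, weights, and review threshold are hypothetical and would need calibration against labeled cases.

```python
from dataclasses import dataclass

# Hypothetical weights for illustration; they sum to 1.0 so the combined
# score stays in [0, 1] when each signal is in [0, 1].
WEIGHTS = {
    "creation_burst": 0.3,
    "timing_regularity": 0.3,
    "content_overlap": 0.4,
}


@dataclass
class Signals:
    """Automated indicator scores, each assumed to be normalized to [0, 1]."""
    creation_burst: float
    timing_regularity: float
    content_overlap: float


def triage(signals, review_threshold=0.5):
    """Return (score, queue) where queue is 'human_review' or 'monitor'.

    The function never labels activity as coordinated; it only decides
    whether an analyst should look at the case.
    """
    score = sum(w * getattr(signals, name) for name, w in WEIGHTS.items())
    queue = "human_review" if score >= review_threshold else "monitor"
    return round(score, 2), queue


if __name__ == "__main__":
    print(triage(Signals(1.0, 0.8, 0.9)))
    print(triage(Signals(0.1, 0.2, 0.0)))
```

Keeping the final determination out of the automated path reflects the point above: the score prioritizes analyst attention and does not replace it.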

ASSESSMENT: Key Intelligence Takeaways

Analysis of documented influence operations reveals several critical patterns that inform defensive methodology:

- Scale and sophistication are increasing: Modern campaigns deploy hundreds of accounts, sophisticated targeting algorithms, and professional content creation capabilities

- Cross-platform coordination is standard: Effective operations simultaneously target multiple social media platforms, traditional media, and messaging applications

- Timing exploitation is systematic: Campaigns are timed to intensify during crises, elections, and other moments of heightened social tension

- Detection requires human expertise: While automated tools provide valuable initial screening, human analysts remain essential for definitive identification and attribution

- Defensive effectiveness requires coordination: Individual and organizational countermeasures must be supported by platform policies and international cooperation frameworks

Forward-looking assessment: The operational environment suggests that influence operations will continue evolving in complexity and scale. Emerging technologies including artificial intelligence-generated content and deepfakes will likely be integrated into future campaigns. Defensive methodologies must therefore emphasize adaptability, cross-sector cooperation, and continuous capability development to maintain effectiveness against evolving threats.

Developing a robust methodology for detecting and countering influence operations is a critical component of modern information security. Organizations and individuals who implement systematic detection and defense frameworks will be better positioned to maintain cognitive autonomy in an increasingly complex information environment.